The Nine Best Seasons Under 70 RBI

By now I’m sure you’ve seen our series explaining why RBI is not a good statistic for measuring individual value. The reason is simple: RBI is too dependent on the quality of the team around you, because the number of baserunners, the location of those baserunners, and the number of outs are all outside of a player’s control. To catch you up, we’ve already seen:

- How RBI can mislead you when comparing two players

- How you can have a lot of RBI during a bad season

- And how RBI don’t even out over the course of a career

Now, let’s turn the question on its head. Below you’ll find the best seasons since 1920 (when RBI became an official stat) in which a player had fewer than 70 RBI while also having 600 or more plate appearances. In other words, these are players who had a full season of at bats, played great, and didn’t have many RBI. The ranking uses wRC+ (what’s wRC+?), an offensive rate statistic that compares a player to league average while adjusting for park, meaning that you can use it to compare across eras. 100 is average and every point above or below is a percent better or worse than league average.

| Rank | Season | Name | Team | PA | RBI | AVG | OBP | SLG | wRC+ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 9 | 1938 | Arky Vaughan | Pirates | 650 | 68 | 0.322 | 0.433 | 0.444 | 150 |
| 8 | 1993 | Rickey Henderson | – – – | 610 | 59 | 0.289 | 0.432 | 0.474 | 151 |
| 7 | 1968 | Pete Rose | Reds | 692 | 49 | 0.335 | 0.391 | 0.470 | 151 |
| 6 | 1975 | Ken Singleton | Orioles | 714 | 55 | 0.300 | 0.415 | 0.454 | 152 |
| 5 | 1987 | Tony Gwynn | Padres | 680 | 54 | 0.370 | 0.447 | 0.511 | 153 |
| 4 | 1974 | Rod Carew | Twins | 690 | 55 | 0.364 | 0.433 | 0.446 | 153 |
| 3 | 1968 | Jimmy Wynn | Astros | 646 | 67 | 0.269 | 0.376 | 0.474 | 159 |
| 2 | 1974 | Joe Morgan | Reds | 641 | 67 | 0.293 | 0.427 | 0.494 | 162 |
| 1 | 1988 | Wade Boggs | Red Sox | 719 | 58 | 0.366 | 0.476 | 0.490 | 167 |

What you can see from this list is that these are excellent seasons and none of them produced more than 68 RBI. Let’s put this in the modern context. In 2012, only 8 players had a wRC+ of 150 or better: Cano, Encarnacion, Fielder, McCutchen, Braun, Posey, Cabrera, and Trout. On the other hand, 80 players had 70 or more RBI in 2012. Among them were Alexei Ramirez, who had 73 RBI and a 71 wRC+, and Delmon Young, who had 74 RBI and an 89 wRC+.
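To make the wRC+ scale concrete, here is a minimal Python sketch that turns a wRC+ value into "percent above or below league average" for a few of the seasons mentioned above. The function names `pct_vs_league` and `describe` are made up for this illustration; the numbers come straight from the table and the 2012 examples.

```python
def pct_vs_league(wrc_plus: int) -> int:
    """Percent better (+) or worse (-) than league average; 100 is average."""
    return wrc_plus - 100

# (player, season, RBI, wRC+) taken from the table and the 2012 examples above
seasons = [
    ("Wade Boggs", 1988, 58, 167),
    ("Joe Morgan", 1974, 67, 162),
    ("Alexei Ramirez", 2012, 73, 71),
    ("Delmon Young", 2012, 74, 89),
]

def describe(name, year, rbi, wrc_plus):
    """One-line summary pairing the RBI total with the wRC+ reading."""
    diff = pct_vs_league(wrc_plus)
    direction = "above" if diff >= 0 else "below"
    return f"{name} ({year}): {rbi} RBI, {abs(diff)}% {direction} league average"

for row in seasons:
    print(describe(*row))
```

Read side by side, the two 2012 hitters out-drove-in both Boggs and Morgan while hitting well below league average.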

Usually big RBI numbers and high wRC+ go hand in hand, but there is a lot of variation that obscures the results. Good hitters usually have a lot of RBI, but not always. You’ve seen it in our previous posts on the subject and now you can see that great seasons don’t guarantee you anything in terms of RBI.

RBI isn’t the worst statistic in the world, but it just isn’t a good way to measure individual value when you consider how some players can have 100 RBI in a year and be 25% below average and some can have fewer than 70 RBI and be 50% better than league average. These numbers don’t even out over an entire career and you can’t use RBI to compare two players.

There isn’t a lot RBI can tell you about individual players. You can be good and not have them, you can be bad and have them, and this isn’t about small samples. RBI describe what happened on the field, but they are a blunt and unhelpful tool in measuring individuals. It’s time to move forward and start lining up our valuations with better measures like wOBA, wRC+, and wRAA. If you use RBI to measure players, you’re going to end up thinking Ruben Sierra’s 1993 season, in which he had 101 RBI, was better than Rickey Henderson’s 1993, in which he had 59 RBI, when in reality Sierra was 20% below average and Henderson was 50% above average. That’s way too big a mistake to make when there are much better alternatives.

RBI Are Misleading Even Over Entire Careers

In keeping with the recent theme, I’d like to take another look at RBI as a statistic. Recently, I’ve shown you why RBI can be misleading when comparing two players’ value and why having a lot of RBI doesn’t necessarily mean you had a good season. To catch up on these and other similar posts about baseball statistics, check out our new Stat Primer page.

Today, I’m turning my attention to RBI over entire careers. You’ve seen already that RBI aren’t a good way to measure players in individual seasons, but we’ve yet to see how well they do at explaining value in very large samples. The answer is not much better.

To evaluate this, I took every qualifying player from 1920 (when RBI became an official stat) to 2013 (2,917 in all) and calculated their career RBI rate by simply taking their RBI/Plate Appearances. This will allow us to control for how often each player came to the plate so Babe Ruth’s 10,000 PA can go up against Hank Aaron’s nearly 14,000. Next, I compared that RBI Rate to wRC+ (what’s wRC+?) which is a statistic that compares offensive value to league average while controlling for park effects. The simple explanation is that wRC+ is a rate statistic that controls for league average, meaning that a 110 wRC+ means the same thing in 1930 as it does in 1980. League average is 100 and every point above or below is a percent better or worse than league average in that era.

The results aren’t great for RBI as an individual statistic. Overall, the adjusted R squared is .4766 which means that about 48% of the variation in wRC+ can be explained by variation in RBI Rate. Put simply, players who have more RBI per PA are better hitters on average than those with fewer, but there is a lot of variation that isn’t explained by RBI Rate meaning you can’t just look at RBI and know how good a player was.
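For readers who want to see the mechanics, here is a rough Python sketch of the calculation described above: compute RBI per plate appearance, fit a one-variable least-squares line against wRC+, and report adjusted R squared. The six "careers" are invented numbers for illustration only, not real players and not the 2,917-player data set, and `adjusted_r_squared` is a hypothetical helper name.

```python
def adjusted_r_squared(x, y):
    """Adjusted R^2 for a one-predictor least-squares fit of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    syy = sum((yi - mean_y) ** 2 for yi in y)
    r2 = (sxy ** 2) / (sxx * syy)
    # One predictor, so the adjustment uses n - 2 residual degrees of freedom
    return 1 - (1 - r2) * (n - 1) / (n - 2)

# Hypothetical careers: (RBI, PA, wRC+). Invented numbers, not real players.
careers = [
    (900, 8000, 105),
    (1200, 8500, 130),
    (600, 7000, 110),
    (1500, 10000, 125),
    (700, 6500, 95),
    (1000, 9000, 140),
]
rbi_rate = [rbi / pa for rbi, pa, _ in careers]
wrc_plus = [w for _, _, w in careers]

print(round(adjusted_r_squared(rbi_rate, wrc_plus), 3))
```

A value near 1 would mean RBI Rate nearly pins down wRC+; a value around .48, as in the real data, leaves roughly half of the variation unexplained.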

What this graph is showing you is quite striking. First, notice how many players have similar RBI Rates who have wildly different wRC+ and second notice how players with the same wRC+ have wildly different RBI Rates. Generally more RBI mean you’re better, but there’s a lot left unexplained by this stat.

Like I’ve said before, RBI isn’t a made up stat that is useless like wins for a pitcher because RBI reflects a real event on the field and is critical for score keeping. The problem with RBI is that it is too dependent on context and the team around you. Two players who are equally good on offense can have very different RBI Rates because they have a different number of opportunities to drive in runs. Similarly, players who drive in the same number of runs may be much different offensive players in terms of quality.

Even if you’re someone who thinks clutch hitting is a predictive skill, surely you can recognize that RBI is extremely context dependent. Your RBI total depends on how good you are, but also how many runners are on base, how many outs there are, and where the runners are positioned on the bases – all of which you have no control over as a hitter.

I’m on the front lines of the #KillTheWin movement, but I don’t think we should kill the RBI. The RBI just needs to be put in proper context and understood as a descriptive stat and not a measure of player value. Miguel Cabrera gets a lot of RBI, partially because he’s awesome, but also because his team gets on base in front of him all the time. Driving in runs is an important part of winning, it just isn’t an individual statistic. It’s a team statistic and we should view it as such.

You’ve seen that RBI can mislead you when comparing two players, that bad players can have a lot of RBI, and now you’ve seen that this isn’t something that evens out over time. RBI is simply not a good way to measure individual value when it can tell you the wrong thing this much of the time. There are better ways to measure the same concepts like wOBA, wRC+, and wRAA. Feel free to click on the links to learn more and check back for more on why you should put less stock in RBI.

RBI Is A Misleading Statistic: A Case Study

One of our missions here at New English D is to help popularize sabermetric concepts and statistics and diminish the use of certain traditional stats that are very misleading. If you’re a return reader, you’ve no doubt seen our series about the pitcher win:

- The Nine Best Seasons Under 9 Wins

- The Nine Worst 20 Win Seasons

- Comparing Wins Over Entire Careers

- A Case Study from 2012 about Wins

- 12 Assorted Facts Regarding Wins

I encourage you to read those posts if you haven’t already, but I’m confident in the case I’ve laid out. Wins aren’t a good way to measure pitchers’ performance and I’ll let those five links stand on their own. Today, I’d like to move forward and pick up the mantle with another statistic that is very misleading based on how it is currently used: Runs Batted In (RBI).

I’ll have a series of posts on the subject, but I’m going to start with a case study in order to explain the theory. RBI are a bad statistic because they are a misleading measure of value. Most people consider RBI to be really important because “driving in runs” is critical to success, but RBI is very much dependent on the performance of the other players on your team. A very good hitter on a bad team will have fewer RBI than a good hitter on a good team because even if they perform in an identical manner, the first hitter will have fewer chances to drive in runners. Even if they have the same average, on base, and slugging percentages overall and with runners on and with runners in scoring position. The raw number RBI is a blunt tool to measure the ability to drive in runs.

Factors that determine how many RBI you have outside of your control are the number and position of runners on base for you, the number of outs when you come to the plate with men on base, and the quality of the baserunners. If you get a hit with runners in scoring position 40% of the time (a great number) but there are just 100 runners on base for you during a season, you will get no more than 40 RBI. If you get a hit 40% of the time and have 400 runners on base for you during a season, you could have 100 RBI. That’s a big difference even if you perform in the same way.

I’m not making the case here that RBI is completely meaningless and that hitting with runners on base is exactly the same as hitting with the bases empty, but simply that RBI as a counting stat is very misleading. Even if you think the best hitters are the guys who get timely hits and can turn it up in the clutch, you surely can appreciate that certain guys have different opportunities to drive in runs. RBI is very dependent on context and that means it’s not a very good way to measure individual players.

Allow me to demonstrate with a simple case study. Let’s start with comparing two seasons in which the following two players both played the same number of games.

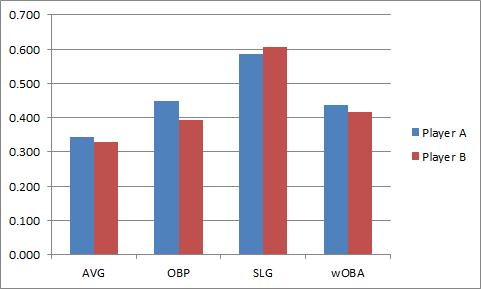

As you can see, Player A leads in average, OBP, and wOBA (what’s wOBA?) and is just a bit behind in slugging. In wRC+, Player A leads 177 to 166 over Player B. If we take a look at BB% and K%, Player A looks much better.

All in all, Player A is the better player. We’ve looked at all of their rate stats and we’ve looked at wRC+ which controls for league average and park effect. It’s hard to argue that Player B is better. I couldn’t make a case to that effect.

Here’s the big reveal which some of you have probably figured out. Player A is Miguel Cabrera in 2011, Player B is Miguel Cabrera in 2012. This is the same player during two different seasons. In 2011, when Cabrera was clearly the better player, he had 105 RBI. In 2012, when he was worse, he drove in 139. Everything tells us he was better in 2011 except RBI. That should make us skeptical. It’s even more of a problem when you consider his situational hitting.

The graphs below are on identical scales:

Cabrera was better in 2011 in every situation and by each statistic except for his average (very close) and slugging percentage with no one on base, which tells you nothing about how well he drives in runs. If you look at the HR distribution it tells you the same story.

| HRs | 2011 | 2012 |
| --- | --- | --- |
| Bases Empty | 14 | 27 |
| Men on Base | 16 | 17 |
| Men in Scoring | 10 | 9 |

We can give him credit for those solo HR RBI from 2012, so let’s just lop 13 off the top. That still leaves 2012 Cabrera with 21 more RBI than 2011 Cabrera. Cabrera had a better season in 2011, but he had fewer RBI than in 2012. Most of this can simply be explained by the Tigers’ team OBP in the two seasons and where he hit in the lineup. If you subtract out Cabrera the Tigers got on base about 32% of the time in 2011 and 32.4% of the time in 2012 while Cabrera got to the plate a little less often because he hit 4th instead of 3rd. So there are more baserunners in general in 2012, but we can break this down even further.

In sum, Cabrera actually had more runners on base for him in 2011 than in 2012 but that doesn’t really tell the whole story. Let’s break it down by the number of baserunners on each base when he came to the plate:

| | 2011 | 2012 |
| --- | --- | --- |
| Runner on 1B | 235 | 212 |
| Runner on 2B | 150 | 146 |
| Runner on 3B | 74 | 86 |

This should tell you the story even better. Cabrera had more baserunners in 2011, but the baserunners in 2012 were more heavily slanted toward scoring position. Cabrera had more runners closer to the plate so it’s easier to drive them in.
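The table above can be boiled down to two numbers per season: total baserunners and the share of them standing in scoring position. A quick Python sketch, using only the counts from the table (the helper name `scoring_position_share` is just for this illustration):

```python
# Runners on each base when Cabrera came to the plate, from the table above
runners = {
    2011: {"1B": 235, "2B": 150, "3B": 74},
    2012: {"1B": 212, "2B": 146, "3B": 86},
}

def scoring_position_share(by_base):
    """Fraction of all baserunners who stood on second or third."""
    total = sum(by_base.values())
    return (by_base["2B"] + by_base["3B"]) / total

for year, by_base in runners.items():
    total = sum(by_base.values())
    share = scoring_position_share(by_base)
    print(f"{year}: {total} runners, {share:.1%} in scoring position")
```

This prints 459 runners with 48.8% in scoring position for 2011, and 444 runners with 52.3% in scoring position for 2012: fewer runners overall in 2012, but more of them parked on second or third.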

I intentionally chose Cabrera for this example because it strips away the idea that a given player just “has a knack” for driving in runs. Cabrera is an “RBI guy” if you subscribe to that idea. Miguel Cabrera had a better season in 2011 than 2012 when you break it down overall and in contextual situations. The only thing that helped 2012 Cabrera accumulate more RBI is that he had more runners on base closer to home when he got there. He played no role in getting those runners on base or closer to home, but he was able to more easily drive them in and get credit in the RBI column. This also isn’t as simple as converting RBI into a simple rate stat because where the baserunners are located and how many outs there are matter too, not just the number of situations.

This is the first step in a longer conversation but the takeaway point here is that RBI is a stat that depends a lot on the team around you. Cabrera can’t control how many runners get on base and where they are on the bases when he comes to the plate. We shouldn’t judge a player for where he hits in the lineup and how the rest of the hitters on the team perform. It’s important to hit well with runners on base. I personally think we overvalue that skill over the ability to hit well in general, but I’ll leave that alone for now. Can we at least agree that a player who hits better with runners in scoring position and overall should be considered the better hitter? If that’s the case, then RBI is misleading you as an individual statistic. It’s that simple. I’m going to start laying out more evidence over the next couple weeks so stay tuned, but I’ll leave with this.

RBI is a descriptive statistic. It tells you who was at bat when a run scored and is critical to keeping track of a game in the box score. That’s why it was invented in the 1920s. You want to be able to scan a scorecard and recreate the game. RBI has a place in baseball, but only as a descriptive measure, not as a measure of value. Yet the RBI is still critical to MVP voting, arbitration salaries, and overall financial health of the players. They are judged by a statistic that doesn’t measure individual value and it is bad for their psyches. Players should focus on stats they can control and RBI isn’t one of those. It doesn’t measure individual value because, as you can see in this very controlled example, RBI is misleading you.

12 Other Reasons To Kill The Win

Over the last few weeks I’ve been breaking down reasons to ignore the pitcher win and I think the case is pretty airtight. First I gave you the 9 best seasons under 9 wins, then I gave you the 9 worst 20 win seasons, and showed you that wins do not even out over a career. Finally, I presented a case study in wins using Cliff Lee’s and Barry Zito’s 2012 seasons. The evidence is clear: wins do not reflect individual performance and shouldn’t be used as such. But if you’re not convinced, read this and tell me what you think (all numbers for starting pitchers from 2013 entering 6/13):

- A pitcher has gone 6+ IP and allowed 0 ER and not earned a win 68 times.

- If you lower that to 5+IP and 0 ER, it goes up to 82 times.

- A pitcher has gone 6+ IP and allowed 4 or fewer baserunners and not earned a win 50 times.

- A pitcher has gone 6+ IP, allowed 4 or fewer baserunners AND allowed 0 ER and not earned a win 20 times.

- A pitcher has gone 8+ IP and allowed 1 or fewer ER and not earned a win 23 times.

- A pitcher has gone 8+ IP and allowed 1 or fewer ER and earned a LOSS 4 times.

- A pitcher has gone 5 IP or fewer and allowed 10 or more baserunners and earned a win 29 times.

- A pitcher has gone 6 IP or fewer and allowed 5 ER or more and earned a win 12 times.

- A pitcher has allowed 6 ER or more and earned a win 7 times.

- A pitcher has walked 6 or more batters and earned a win 9 times.

- A pitcher has allowed 12 baserunners or more and earned a win 23 times. Only two of them went 7 or more innings.

- A pitcher has gone 7+IP with 10+ K, 2 or fewer BB, and 3 or fewer ER and not earned a win 28 times.

So let’s review. You can have a great season and win fewer than 9 times. You can have a below average season and win 20. You can have a much better career than another pitcher and finish with the same winning percentage. A pitcher can dramatically out pitch another and have way fewer wins in a season. And finally, the above 12 things can happen…before the All-Star break.

I’ll close with this. In 2012 a pitcher went 7 or more innings and allowed 0 ER 363 times. They didn’t earn a win 57 times in those starts. Do we really care about a statistic that says a pitcher who goes 7 or more innings while allowing 0 ER shouldn’t get a win 16% of the time?

I know I don’t.

Victor Martinez Returns Despite Never Leaving

Victor Martinez’s early season statistics weren’t good. Most were pretty bad. He wasn’t getting on base, he wasn’t hitting for power, and because he plays a position that doesn’t utilize a glove, he wasn’t adding value on defense in the way Andy Dirks has done during his own offensive struggles.

But seemingly all of a sudden, Martinez is crushing the baseball. In the last 30 days, Martinez has a very respectable 112 wRC+ (what’s wRC+?). In the last 14 days he has a 170 wRC+. In the last 7 days, it’s 209. That is what the average person would describe as a trend, or perhaps a hot streak. Regardless of the cause or the sustainability of this performance, anyone can look at his numbers and recognize that Martinez’s performance is getting better.

I wrote earlier this year that Victor Martinez was having a particularly extreme case of terrible luck on hard hit balls. He was among the top handful of players in the game at making hard contact, but his batting average and power numbers didn’t reflect that. In fact, he was the only one near the top of the list who wasn’t hitting well above league average.

It made no logical sense that Martinez would make so much hard contact and not reap the rewards. It wasn’t really happening to any other hitter and it doesn’t really happen all that often in general. Hard contact is very highly correlated with, and likely the cause of, success in the batter’s box. But it wasn’t happening for Martinez.

Why?

The answer is actually so simple that it’s hard to grasp. Nothing was happening. Victor Martinez was doing nothing wrong. He wasn’t chasing bad pitches, he wasn’t hitting a dramatic number of popups or anything. Victor Martinez was the victim, if you can call it that, of something we statisticians call random variation.

Think of it this way. If a player’s true talent level is a .300 batting average, that means that over the course of the season, he’ll get 3 hits for every 10 at bats. But it doesn’t mean that he’ll get 3 hits in EVERY 10 at bats (that would mean performance uniformly distributed). Sometimes he’ll get 2, sometimes he’ll get 4. Sometimes he’ll get 8 and sometimes he’ll go 0 for 15.

Statistics are excellent and wonderful and we love to use them to measure things, but they have to be used properly. You have to understand what they mean. When Martinez was hitting .210, it meant to date he had gotten about 2 hits in every 10, but it didn’t mean that was his true talent level. He’s a .300 hitter in his career, this window was just a low point. A period of “bad luck” if you want to call it that.

Random variation means, in a simple and nontechnical sense, that the smaller the sample you look at, the higher the likelihood that you’re observing something that doesn’t reflect reality. Miguel Cabrera gets a hit around 33% of the time in his career, but if you look at any 3 at bats, you’re likely to see him have 0, 2, or 3 hits. That’s how sample size works.
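The three at bat example can be checked with a short Python sketch. It assumes every at bat is an independent coin flip with a 33% chance of a hit, which is a simplification (real at bats depend on the pitcher, the park, and plenty else), and `hit_distribution` is a name invented for this illustration.

```python
from math import comb

def hit_distribution(at_bats: int, p_hit: float) -> dict:
    """Binomial probability of each possible hit total, assuming independent at bats."""
    return {
        k: comb(at_bats, k) * p_hit**k * (1 - p_hit) ** (at_bats - k)
        for k in range(at_bats + 1)
    }

# 33% hit rate over any 3 at bats, as in the Cabrera example above
dist = hit_distribution(3, 0.33)
for hits, prob in dist.items():
    print(f"{hits} hits in 3 at bats: {prob:.1%}")

print(f"anything other than exactly 1 hit: {1 - dist[1]:.1%}")
```

Under that assumption, the "expected" one hit in three shows up less than half the time, and a hitless stretch of three at bats happens about 30% of the time.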

This relates to Martinez because the underlying information about Martinez went unchanged during the slump. He wasn’t chasing pitches and he was making hard contact. The walk and strikeout rate looked fine. Good swings were turning into outs way more frequently than they usually do for him or for anyone.

And then all of a sudden it stopped. Somewhere in the last four to five weeks, Martinez just started getting those swings to turn into hits and he’s climbed all the way up to a .254/.311/.367 line after a .221/.290/.274 line in April. He’s not a different player, he’s just getting his hits to drop now and he wasn’t then. He’s taken two and a half months of bad stats and is slowly erasing them.

His numbers were awful. Now they are amazing. Only two things can be responsible for that. One is a change in skill, health, or approach – none of which are evident. The other is a change in fortune – which appears likely. Victor Martinez is the poster child for a concept called “regression to the mean.”

Regression to the mean is an idea that suggests, in baseball, that when a player does something much better or worse than his previous career average it’s likely that he’s going to regress toward the previous average more often than he moves further into the extremes. You can think of regression to the mean as the correction in random variation over large samples.

In a small sample, anything can happen, but if you give something enough time, it will show its true colors. I’m boiling down a complex statistical concept, so well-versed statisticians shouldn’t analyze the wording too literally, but the amazing tear Martinez is on is essentially like the universe balancing out the really unlucky stretch he had.
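Here is a small Python sketch of the "give it enough time" part of that idea. It assumes a hitter with .300 true talent who opens the year with 21 hits in 100 at bats (.210), then performs exactly at .300 from there on. The 100 at bat slump length and the helper name `season_average` are assumptions for illustration, not Martinez’s actual numbers.

```python
def season_average(early_hits, early_ab, later_ab, true_avg):
    """Season-to-date average if the hitter performs exactly at true_avg after the slump."""
    hits = early_hits + true_avg * later_ab
    return hits / (early_ab + later_ab)

# Assumed slump: 21 hits in 100 at bats (.210); true talent assumed to be .300
for later_ab in (0, 100, 200, 400):
    avg = season_average(21, 100, later_ab, 0.300)
    print(f"{later_ab:>3} more at bats: {avg:.3f}")
```

The season line climbs from .210 to .255, .270, and .282 as the sample grows. A hot streak like the one Martinez is on simply gets the line back toward his usual level faster.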

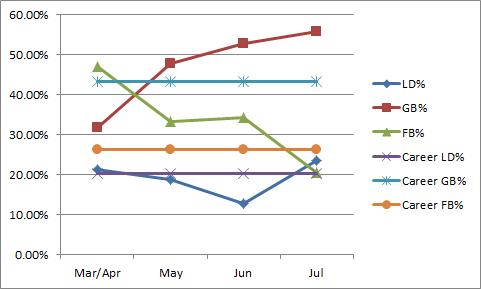

It really is that simple. Take a look at his monthly performance:

It’s getting much better, sure. But there is something in the batted ball data I want you to see. This is a bit cluttered, but take a look.

He was hitting fewer groundballs than normal in April, and now he is hitting more to compensate. He was hitting fewer line drives than normal for the first three months, now he is hitting more. He was hitting way more flyballs than normal to start the year, now he’s hitting fewer. Everything is correcting itself. It’s not that he is now hitting like the career averages he set for himself, it’s that he’s now playing at the other extreme to balance out what happened before. The process for Martinez was good, but the results were all out of whack. Now the process is the same and the results are good.

This is a simple case of regression to the mean. There wasn’t anything wrong with Martinez that time couldn’t fix. The Tigers did just fine while he was “struggling” and now they’re getting the hot-hitting version of him as the race gets going.

In general, this should be a lesson to you that surface statistics can be deceiving. If you thought Martinez was a good hitter entering the season, you shouldn’t change your opinion so quickly when he has a low batting average for six or eight weeks. Almost always, unless a player is hurt (he never looked hurt), he will regress to the mean. He may not ever have the season he had in 2011, as that was likely his career year as a hitter, but he will look very much like the player you expected. He’s been a 120 or so wRC+ hitter for most of his career and there is no reason not to expect something around 110 now that he is entering the downswing of his career.

Enjoy Victor’s hitting streak and power explosion now because you certainly earned it while he wasn’t getting hits. It’s often hard to take a step back and see the world with a wide angle lens, but it’s something we should do a lot more often.

A Case Study in Wins

To bring you up to speed I’ve been laying out evidence over the last few weeks in an effort to help banish the pitcher win as a method for measuring individual performance. I’ve covered a number of topics such as:

- Pitchers who had great seasons and didn’t win

- Pitchers who had below average seasons and won a ton

- These numbers not balancing out over an entire career

The simple complaint with the win statistic is that it doesn’t measure individual performance but is used by people to reflect the quality of an individual. Wins are about pitchers, but they are also about run support, defense, the other team, and luck. We shouldn’t use such a blunt tool when measuring performance when we have better ones. I’ve provided a lot of evidence in the links above supporting this claim, but those posts have been about the best and worst seasons and about career-long samples. Today, I’d like to offer a simple case study from 2012 to illustrate the problem with wins.

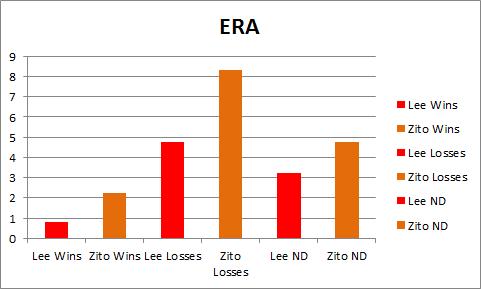

The faces I’ll put on this issue are Cliff Lee and Barry Zito, both of whom appeared on the lists above.

Let’s start with some simple numbers from their 2012 campaigns to get you up to speed. Lee threw more than 25 more innings than Zito and performed better across the board:

Lee had a much higher strikeout rate and much lower walk rate.

Lee had a lower ERA, FIP, and xFIP and if you prefer those numbers park and league adjusted, they tell the same story:

If you’re someone who likes Wins Above Replacement (WAR) or Win Probability Added (WPA) it all points in Lee’s favor as well:

By every reasonable season long statistic, Cliff Lee had a better season than Barry Zito. If you look more closely, you can see that Lee had a great year and Zito had a below average, but not terrible season. There is simply no case to be made that Barry Zito was a better pitcher than Cliff Lee during the 2012 season. None.

But I’m sure you can see where this is going. Cliff Lee’s Won-Loss record was 6-9 and Barry Zito’s was 15-8. Lee threw more innings, allowed fewer runs per 9, struck out more batters, walked fewer batters, and did just about everything a pitcher can do to prevent runs better than Barry Zito and he had a much worse won-loss record. Something is wrong with that. Let’s dig a bit deeper and consider their performances in Wins, Losses, and No Decisions.

Let’s start with something as simple as ERA. In Wins, Losses, and ND, Cliff Lee allowed fewer runs than Zito despite pitching his home games in a park that skews toward hitters and Zito in a park that skews toward pitchers:

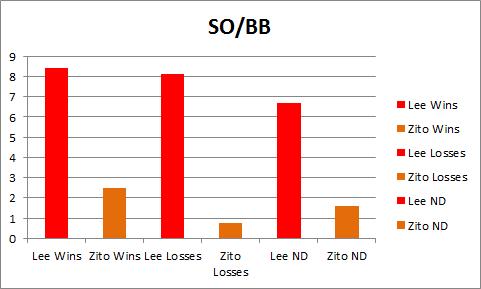

In fact, Lee’s ERA in Losses is almost identical to Zito’s in No Decisions. He allowed the same number of runs when he pitched “poorly” enough to lose as when Zito pitched in a “neutral” way. If we take a look at strikeout to walk ratio, it looks even more lopsided:

Lee way outperforms Zito in this measure even if you put Lee’s “worst” starts up against Zito’s “best” ones. Let’s take a look at OPS against in these starts, and remember, Lee pitches in a hitters’ park and Zito in a pitchers’ park:

Again we find that Lee pitches as well in Losses as Zito does in No Decisions and performs much better across the board. Not only does Lee allow fewer runs in each type of decision, he has a better K/BB rate and a lower OPS against in pitching environments that should favor Zito.

Everything about their individual seasons indicates that Cliff Lee had a much better season than Barry Zito and when you break it down by Wins, Losses, and No Decisions, it is very clear that Lee performed better in all of these types of events. Lee was unquestionably better. No doubt. But Lee was 6-9 and Zito was 15-8. Zito won more games and lost fewer.

If we look at the earned run distribution, you can clearly see that Lee was better overall, on average, and by start:

You likely don’t need more convincing that Lee was better than Zito, in fact, you probably knew that from the start. Lee was better in every way, but Zito’s record was better. How can wins and losses be useful for measuring a player when they can be so wrong about such an obvious case?

Cliff Lee prevented runs better than Zito last season. He went deeper into games. More strikeouts, fewer walks, lower OPS against in a tougher park. He was better than Zito in Wins, Losses, and ND and often better in Losses than Zito was in ND. How can this be? It’s very simple. Wins and Losses aren’t just about the quality of the pitcher, not by a long shot. Even ignoring potential differences in defensive quality (Giants were slightly better) and assuming pitchers can control every aspect of run prevention it still isn’t enough. Lee was better and had a worse record. What good is a pitching statistic if it is this dependent on your offense? It isn’t any good.

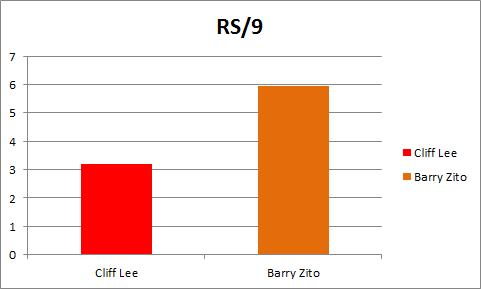

Here friends, are their run support per 9 numbers. This should tell you the whole story:

The Giants got Zito 6 runs a game on average and the Phillies got Lee 3.2. It didn’t matter that Lee way out pitched Zito, he still had no shot to win as many games because the Giants scored runs for Zito and the Phillies didn’t score for Lee. The Giants during the entire season scored 4.4 runs per game. The Phillies scored 4.2. This isn’t as easy as saying that pitchers on better teams win more often. Lee’s team scored much less for him on average and the Giants scored much more for Zito on average.

You can’t just say that a pitcher with a great offense will win more often, it comes down to the precise moments in which they score. How can that possibly have anything to do with the pitchers this statistic hopes to measure? It can’t.

If my global evidence about the subjectivity and uselessness of wins didn’t get you, I hope that this has. There is no justification for using wins to measure pitchers when something like this can happen. Lee was much better than Zito in every way, but if you’re using wins and losses, you wouldn’t know it.

And, just in case you were wondering, Lee was a better hitter too.

Why You Should Give Sabermetrics A Try

A lot of ink has been spilled over the old-school versus new-school debate in baseball analysis and while I’m decidedly on the new-school side of things, I firmly believe that the reason we have a difficult time winning converts is that we’re often too quick to act like our views are obviously the right ones. This isn’t a matter of sabermetricians getting the wrong answers, but we don’t often do enough to make our findings clear to the public. Sometimes we get caught up talking to each other and not talking to everyone.

Don’t get me wrong, I love Fangraphs and other sabermetric heavy sites, but we don’t always do the best job of making the basic principles clear. When someone writes a great post at Fangraphs, they don’t explain why they use wOBA instead of OPS or batting average, they take it as a given and expect the reader to know why or to look it up. That makes less informed baseball fans wary. It’s not that they’re stupid, I don’t think that at all, it’s that they haven’t been given a proper explanation for why we think what we think on this side of the debate.

The sabermetric community offers a lot of resources that explain statistics, but we leave the curious fan with little guidance. It’s not hard to tell why some people hear us talking about Wins Above Replacement and start thinking we’re nuts. It’s our job to explain what we’re doing and it’s our job to sell the message correctly. We’ve done so much groundwork in baseball research that we often forget that a new person is learning about the value of walks every day, and that’s something we just take as a given.

Which is why it’s important for baseball analytics to have a public relations aspect to it too. Brian Kenny from MLB Network and NBC Sports Radio is a great voice for that part of the task. He’s done excellent work bringing sabermetrics into the mainstream of sports coverage. Plenty of others do excellent work on the matter, but he’s made it a mission.

At New English D, we’d like to be a part of that, and often publish basic explanations of sabermetric stats and principles while also pointing out some flaws in the basic stats. Today, I’d like to do something different. Today, I’d like to explain why you should give sabermetrics a try, period. I don’t care how skeptical you are, give me the next 5 minutes.

Here are 5 reasons:

1) The basic statistics were crafted during another era.

Batting average, runs, RBI, SB, wins, ERA, and the other statistics you’re most familiar with were invented a century or more ago to keep track of what happened on the field. They are scoring statistics to record exactly how the game progressed. They’re descriptive and that is great. You can look at a box score and see exactly who was on base and who was at the plate when each run scored, but you can’t always tell which players were most responsible for the win or loss. These stats don’t tell you that much about value. It’s not because these stats are stupid, it’s because they didn’t have calculators and computers to do calculations when these numbers were invented. When you’re using a slide rule or pen and paper to track stats, things have to be simple. They don’t have to be simple anymore because we have the power to compute more information. It doesn’t mean getting a hit with a runner on second isn’t important, it means RBI is a crude way to measure that skill.

2) Progress is good.

Sabermetricians have introduced many new statistics into the world in the last couple decades, and while that might seem unseemly and cluttered, it’s actually no different than anything else. We didn’t use to fly on airplanes or drive cars, and we didn’t use to be able to watch any baseball game on the internet. Heck, we didn’t even have the internet until the 1990s. No one is running around telling everyone to write more letters and put them in mailboxes; we have all pretty much embraced e-mail, texting, and instant messaging. Communication got better and more efficient. We’re better off. Baseball analysis is the same way. These new stats tell us more about baseball than we used to know. Players who walk a lot used to be really undervalued until someone with a computer looked at a lot of baseball games and realized that getting on base is really good, whether you get on via a hit or a walk. Things get better when we develop new technologies. You wouldn’t disable your internet connection, so don’t immediately shut out new stats.

3) We’re asking the same questions.

Sabermetricians and traditional analysts both care about what leads to wins. Traditional analysts tend to just focus on who wins and loses and reverse engineer the explanations, but sabermetrics is just breaking it down a different way. Let’s go through a little thought experiment:

- How do you win? You score more runs than the other team.

- How do you score more runs than the other team? You score runs and you prevent runs.

- How do you score runs? You get on base.

- How do you get on base? You get a hit or you walk.

- How do you prevent runs? You don’t let the other team get on base.

- How do you keep them off the bases? You don’t allow hits or walks.

- How do you prevent hits? Don’t let them put the ball in play or hit homeruns, so strikeouts are good. You can also induce groundballs and use your defense if it is good.

When you think about the question like that, you realize we’re all asking the same thing. Sabermetricians break it down into how you score and prevent runs and they look for what leads to both of those outcomes. It’s nothing devious or nerdy. It’s 100% about scoring runs and preventing them. We’ve just looked at enough data to know which actions lead to both and which actions don’t. Sometimes there is luck involved and you can’t predict luck. We’re all about playing the odds. That’s no different from anything else, it just looks different because we’re using numbers instead of intuition.

4) More information is good.

Even if you like the old statistics, that doesn’t mean the new ones are wrong. If a player has a high batting average, that tells you something about their performance. But so does their on base percentage. So does their slugging percentage. So does Weighted On Base Average (wOBA). So does Wins Above Replacement (WAR). It’s all information about the players and teams. Sabermetricians like these new stats for a reason. The reason is that they tell us something the other statistics do not. Batting average is fine, but it doesn’t tell you if the player is getting on base via a walk. You might not think walks are as good as hits (we don’t either!), but walks are WAY BETTER than outs. Batting average pretends walks don’t exist and we think that’s silly. RBI tells you how many runs a batter has driven in, but it doesn’t tell you how many opportunities that batter has to drive someone in. It’s not fair to Joey Votto that he hits behind Zack Cozart and Prince Fielder gets to hit behind Miguel Cabrera. Those two players are in different contexts. Sabermetrics likes to provide context neutral information. Players can only control certain aspects of the game and we don’t think it’s right to judge a player on things outside of his control. This is especially true for pitchers, who can’t control how much run support they get, how well their defense plays, or which pitcher is on the mound for the other team. Sabermetrics looks at that and says, wins aren’t a great way to measure a pitcher’s performance because most of what leads to a win is out of their control. Let’s look at what is in their control and see how well they do at that.

5) The logic is exactly the same.

When you look at RBI or Wins or Batting Average to judge a player, you’re using statistical information to make an inference about how good that guy is. You’re taking information recorded in the past to make a claim about the present and future. It doesn’t matter if you’re using your eyes during an at bat or a spreadsheet in January, the logic is the same. Past behavior informs predictions about the future. For sabermetricians, we’re just using a lot more information because we have found that using more information and certain kinds of information tends to help make better inferences. For example, this is where the tired phrase “small sample size” comes into play. We’ve looked at a ton of data and see that a really good batting average over a ten day stretch doesn’t predict what the player will do on day 11 very well. For statistics to reflect true talent, you need bigger samples. It’s simple logic and you use it every day. If you think a player is about average and then they have two great days, how much do you change your mind? Not much. If you think a player is average and they have six great months, how much? Probably a lot more. Sabermetrics isn’t any different than that, it’s merely crunching the numbers to give us a better estimate about when information starts to become meaningful.
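To put rough numbers on the small-sample point, here is a minimal sketch. It assumes every at bat is an independent trial with the same hit probability, which is a simplification, and the .270 true talent, 40 at bats for ten days, and 600 at bats for a season are all made-up illustrative values.

```python
import math


def batting_average_spread(true_avg: float, at_bats: int) -> float:
    """Standard deviation of observed batting average over `at_bats`
    independent at bats for a hitter whose true average is `true_avg`
    (simple binomial model, an assumption for illustration)."""
    return math.sqrt(true_avg * (1 - true_avg) / at_bats)


# Hypothetical inputs: a .270 true-talent hitter, ~40 AB in ten days,
# ~600 AB in a full season.
ten_days = batting_average_spread(0.270, 40)
full_season = batting_average_spread(0.270, 600)

print(f"ten days:    +/- {ten_days:.3f}")
print(f"full season: +/- {full_season:.3f}")
```

Under these assumptions the typical swing over ten days is roughly 70 points of batting average, against roughly 18 points over a full season, which is why two great days shouldn’t move your opinion much and six great months should.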

—

If you think about it like that, sabermetrics aren’t that foreign or nerdy. You might need to be a nerd to program a computer to spit out an answer to a question, but you don’t have to be anything but curious to understand what the answer is telling you. It isn’t that the old stats are terrible, it’s that they were developed when they had limited power to make sense of a complex game. You wouldn’t want a surgeon trained in the 1920s to operate on you, so why let a statistic from 100 years ago inform you? Progress is good. Progress leads to more information and better understanding. You can absolutely disagree with a new stat, but you absolutely cannot disagree with a stat because it’s new. We’re asking the same questions and using the same logic, it’s just about being willing to expand the data you’re willing to use to evaluate those questions. You judge players by batting average, why wouldn’t you look at on base percentage too?

Ultimately, sabermetrics are a way to learn more about baseball and I can’t imagine not wanting to do that. I challenge you to learn more or to help others do the same. We have lots of information on this site under our “Stat of the Week” section and other sites offer much of the same. I’ll even make you a guarantee because I love baseball and learning that much. I will answer any question you have about baseball stats. Hit me on Twitter, in the comments, or on e-mail (See “About” above) and I will explain why I like one stat over another or what the best way is to measure something. Anything. That’s my offer. There’s no excuse not to give it a try, I’m pretty sure you’ll like it.

Stat of the Week: Batting Average on Balls in Play (BABIP)

Batting Average on Balls in Play (BABIP) is one of the most easily understood sabermetric statistics because it can be easily calculated at home like many of the basic descriptive stats, but it is also a very powerful tool. Let’s start with the basic idea (or you can read about it at Fangraphs).

BABIP is exactly what it says it is: a hitter’s batting average (or a pitcher’s average against) on balls that are put in play. Starting from batting average, homeruns are subtracted from the numerator, while in the denominator strikeouts and homeruns are subtracted from at bats and sacrifice flies are added. It looks like this:

BABIP = (H – HR) / (AB – K – HR + SF)

Sac bunts aren’t included because you’re making an out on purpose, so they don’t really belong given that they don’t reflect a hitter or pitcher’s skill.
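If you’d rather let a computer do the arithmetic, the formula translates directly into a few lines of Python. This is just a sketch; the function name and the stat line in the example are made up for illustration.

```python
def babip(h: int, hr: int, ab: int, k: int, sf: int) -> float:
    """Batting Average on Balls in Play: (H - HR) / (AB - K - HR + SF)."""
    balls_in_play = ab - k - hr + sf
    if balls_in_play <= 0:
        raise ValueError("no balls in play, BABIP is undefined")
    return (h - hr) / balls_in_play


# Hypothetical season line: 180 H, 25 HR, 600 AB, 100 K, 5 SF.
example = babip(h=180, hr=25, ab=600, k=100, sf=5)
print(f"{example:.3f}")
```

In the example, 155 non-homerun hits on 480 balls in play works out to a BABIP a little over .320.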

BABIP tells you what percentage of balls hit somewhere the defense could make a play go for hits and can tell us a lot about players. For hitters, defense, luck, and skill determine your BABIP. A good defense playing against you will lower your BABIP because they will catch balls that should be hits, luck will lower or raise your BABIP because sometimes hard hit balls go right at someone, and skill will influence your BABIP because line drive hitters and speedy runners are more likely to have higher BABIPs because they hit the ball in a way that is more likely to result in hits or they leg out infield singles.

We generally think of true talent levels for hitters between .250 and .350 with average being right around .300. If you see someone deviate greatly from .300 or so, there may be a legitimate reason, but it is also very likely about luck. Hitters can influence their BABIP, but BABIP is fluky and takes a while to settle down, meaning that in small samples your BABIP can be quite different from your true talent level. This is what we mean when we say someone’s success is BABIP driven. No one can sustain a .450 BABIP for a whole season, but they can do it for two weeks and that can inflate statistics like batting average and slugging percentage in small samples.

The same is true for pitchers, but it’s even more critical. Pitchers have very little control over what happens to the baseball once it is put in play. Strikeouts, walks, and homeruns rest solely on a pitcher, but once a hitter makes contact it’s out of their hands. Most pitchers will have BABIPs close to .300 and any serious deviation from that number means there is some serious luck or defense involved. Even pitchers who are easy to hit will still have BABIPs closer to average because their defense will still get to a high percentage of balls in play.

Using BABIP is very easy. Hitters can have higher or lower BABIPs based on their skills, but they are unlikely to post very high or very low BABIPs. For example, only 14 hitters in MLB history have BABIPs above .360 for their careers and only 26 hitters since WWII have BABIPs lower than .240. What you want to do is compare a hitter’s season BABIP to their previous seasons to see if it is in line. If you jump from a .310 career BABIP to a .360 the next season, you’re likely due for some regression to the mean. BABIP can be predictive like this if there is no underlying change in skill.

For pitchers it’s even better. If a pitcher has a BABIP that deviates heavily from average, it’s almost certainly a function of luck or defense.

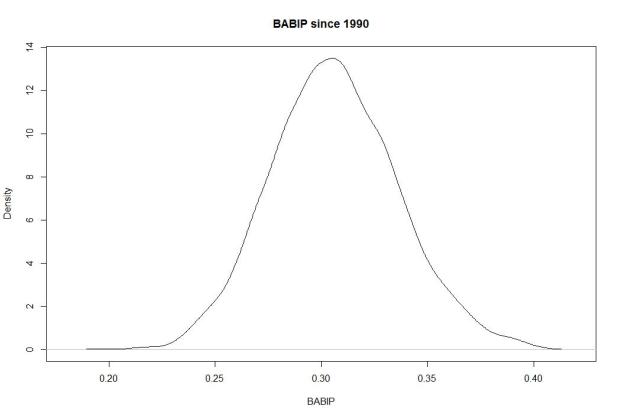

It’s quite straightforward. If someone’s BABIP deviates heavily from .300 and they have no history of a high or low BABIP, it means you’re likely looking at something fluky. Here’s a quick demonstration to prove the point. Here is every qualifying hitter season since 1990 by BABIP:

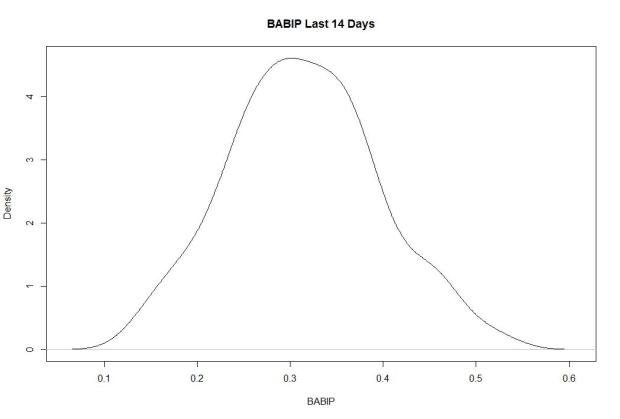

You can see how it centers on .300 and almost never extends beyond .250 and .350. But in small samples, it can be fluky and give you weird results that can inflate your batting average or other numbers. Let’s look at the last 14 days in MLB:

You’ll notice the roughly normal shape, but also notice the scale across the horizontal axis. Lots of players have BABIPs in the .400s and below .200 over the last two weeks, meaning lots of players are over- and underperforming their true talent thanks to luck and random variation.

The takeaway is simple. BABIP is a place to look when deciding if a player’s improved (or worse) results are coming from a real change in skill or good fortune. If the BABIP looks funky, look closer. If the BABIP looks typical, there might be something real going on.
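The check described in this section can be sketched as a tiny helper: compare a BABIP to the player’s own career mark if you have one, or to the league-wide .300 if you don’t, and flag big gaps. The function name and the 40-point tolerance are my own illustrative assumptions, not an established cutoff.

```python
from typing import Optional


def babip_flag(season: float, career: Optional[float] = None,
               league: float = 0.300, tolerance: float = 0.040) -> str:
    """Label a BABIP as typical or worth a closer look.

    `tolerance` is a hypothetical threshold chosen for illustration.
    """
    baseline = career if career is not None else league
    gap = season - baseline
    if abs(gap) <= tolerance:
        return "typical"
    return "high: look closer" if gap > 0 else "low: look closer"


# The .310 career hitter who jumps to .360, from the example above.
print(babip_flag(0.360, career=0.310))
# A pitcher with no track record, compared to league average.
print(babip_flag(0.245))
```

A flag is only a prompt to dig deeper. It can’t tell you whether the gap is luck, defense, or a real change in skill.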

What About Pitcher Wins With A Long Lens?

This season, the debate between those who like using wins to judge pitchers and those who want nothing more than to forget that statistic exists has heated up and we’ve seen the movement heavily publicized by MLB Network’s Brian Kenny, who takes on “wins” on a daily basis.

The argument against using wins is simple. The way pitcher wins are determined does not reflect individual pitcher performance, and therefore is an improper judge of how well someone performed. There are countless examples, most clearly Cliff Lee last season and James Shields and Chris Sale this season. Last week, we took on some of the best seasons ever by pitchers who won 9 or fewer times in a season. So much of what leads to wins is completely out of the pitcher’s control and they shouldn’t be judged based on how many runs their team scored for them. Run support, even if we strip away defense, the opposing pitcher, and dumb luck, is a clear and important factor in how many wins you have.

Last week, I gave you this graph which showed that in the 8,000+ qualifying seasons since 1901, wins did very little to explain overall performance:

But those numbers just reflect single seasons. I started wondering about bigger samples. Pitchers can get really lucky or unlucky in a given start and clearly they can in given seasons, but what about in their careers? Can you fake your way through an entire career of wins? It turns out that you can. Let’s take a look.

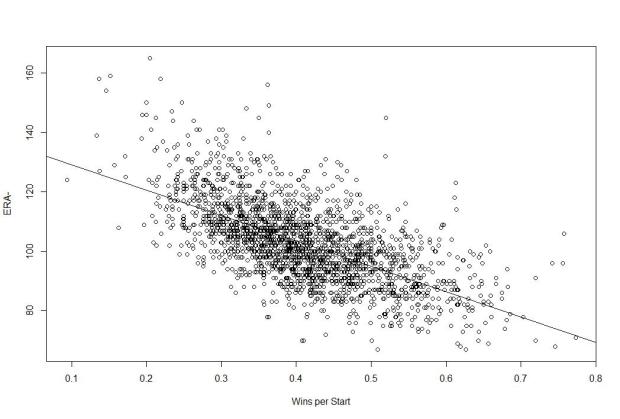

Below is a graph of Wins per Start (so as to control for guys who made 400 starts and guys who made 250 starts) and ERA- (which is simply ERA scaled to league average during that era and adjusted for park effects. Lower ERA- is better and 100 is league average, meaning an ERA- of 90 is 10% better than average). What you see here is that wins fare no better in career samples than season ones (sample size of 2,155):

The trend line is clear in that the lower your ERA-, the more frequently you win, but there is significant variation at each point. For example, at a wins per start of 40%, some pitchers have ERA- of 80 and some have ERA- of 120. The adjusted R squared here is .3966, which means that only 40% of the variation in ERA- can be explained by Wins per Start. That’s less than half.
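For anyone who wants to see where a number like that comes from, here is a minimal sketch of R squared and adjusted R squared for a one-variable linear fit. The six data points are invented purely to exercise the functions. They are not the career data behind the graph.

```python
def r_squared(x: list[float], y: list[float]) -> float:
    """R squared of a simple (one predictor) least-squares line,
    which equals the squared Pearson correlation between x and y."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy ** 2 / (sxx * syy)


def adjusted_r_squared(r2: float, n: int, predictors: int = 1) -> float:
    """Penalize R squared for the number of predictors used."""
    return 1 - (1 - r2) * (n - 1) / (n - predictors - 1)


# Made-up toy values, for illustration only.
wins_per_start = [0.30, 0.35, 0.40, 0.40, 0.45, 0.50]
era_minus = [115.0, 100.0, 120.0, 80.0, 95.0, 85.0]

toy_r2 = r_squared(wins_per_start, era_minus)
print(f"toy R squared: {toy_r2:.3f}")
print(f"toy adjusted:  {adjusted_r_squared(toy_r2, len(era_minus)):.3f}")
```

With a sample as large as 2,155 pitchers, the adjustment barely moves the number, so the adjusted and unadjusted figures tell the same story.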

If we used FIP-, which is the scaled version of Fielding Independent Pitching (FIP), the results are even more troubling for wins.

The adjusted R squared here is only .2131, meaning that only about 21% of the variation in FIP- can be explained by Wins per Start. You can win 50% of your starts as the best pitcher of all time or as one of the worst.

The takeaway here is very simple and very important. Your ability as a pitcher to keep the other team from scoring (as seen with ERA-) and your ability to prevent runs based on only that which you can control (FIP-) are not that heavily correlated with winning. You can’t use a pitcher’s wins to predict how good they are because you can win if you prevent runs like a superstar or if you prevent runs like a Triple A long reliever. Even if you strip out defense and the quality of the other offense and give the pitcher credit for every single run he allows, there is still the issue of team run support that he has zero control over.

Last week I provided simple, straightforward evidence for why wins don’t reflect performance over the course of the season, but here I’ve shown that wins don’t even tell you much over the course of an entire career. It’s the job of a starting pitcher to limit the runs they allow, but the ability to limit runs doesn’t correlate very well with how often you win because so much of that is out of your hands.

Wins are not a good measure of individual performance and we should stop using them as such. This isn’t because sabermetricians don’t understand the point of the game, which is to win, but rather because we understand that “wins” as a stat for pitchers tells us nothing about how much they contributed to helping their team win. Pitchers try to prevent runs. That is only half of the game. They shouldn’t be praised or blamed for what happens on the other side.

A New Way To Measure Relief Pitchers: SOEFA

I’ve long been a critic of the save statistic, and I don’t need to rehash why it’s the scourge of the baseball world, but relief pitching is still an important part of the game and we often struggle to properly measure it. Won/Loss record and saves tell you nothing about a player’s individual skill, especially not relievers, and even things like ERA don’t do a lot of good because relievers aren’t charged for runners they let in belonging to another pitcher and can get charged with runs allowed by the pitchers who come after.

Strikeouts, walks, and homeruns allowed (the basis of FIP) are good measurements, but FIP inherently strips away context. And context does matter for relief pitchers. It’s an elite reliever’s job to come in and strand runners, so strikeouts are good and homeruns are bad, but sequencing is really important and it matters a lot when they get outs and when they allow baserunners.

In a sense, FIP and similar statistics are good, but they aren’t perfect because they’re context neutral and we might want some context in reliever stats. Win Probability Added (WPA) is a typical way to fix this, but this feels too context dependent for me. WAR is always a nice combination of these kinds of measures, but WAR is a counting stat so how much a reliever is used matters a lot, and relievers are often used incorrectly.

My point here is not that I can come up with something better, but rather that I want to try to add something. I always look at reliever stats and find logical holes more often than with position players and starters. I want a reliever stat that measures context, considers the peripheral numbers, and also understands the luck involved. I didn’t find one out there that satisfied me, so I went to work inventing one.

I’ll say this. This isn’t perfect and I want to improve it. Flaws you may find in the method should not cause you to discount it, but rather to add to the discussion. This is a first crack. I hope you find it useful.

The Goal

So first, I started with a question: What is the job of a relief pitcher? Here was my answer:

- Strand runners

- Don’t allow baserunners

- If you allow baserunners, don’t let them score.

With that outlined, I went to work thinking about how to measure each and came up with the following statistic that I will call SOEFA, pronounced like “sofa.” It stands for Strand On-base ERA FIP Average and should be thought of as a way to measure relievers from your sofa. Yes, I have a whimsical side.

It has four components, let’s walk through them.

The Formula

First is Strand Rate+, which I calculated as what percent better or worse a reliever is from league average at stranding runners. League average is 70%, so if you strand 100% of your inherited runners, your Strand Rate+ is .43 because you are 43% better than league average.

Second is your Expected OBP+, or xOBP+, which is your opponents’ on base percentage calculated as a percentage deviation from league average just like SR+, except that I regress your hits allowed based on league average BABIP because sometimes batters get lucky hits.

Third is my version of ERA+, which is just like normal ERA- except I scale mine to zero instead of 100 like the major stat sites and invert it. The same principles regarding deviation from average apply. FIP+ is exactly the same, except I use FIP-. These numbers are park adjusted.

To combine them, I add SR+ to xOBP+ and then add ERA+ to get eSOEFA. I then repeat the same process and replace ERA+ with FIP+ to get fSOEFA. A pitcher’s SOEFA score is the average between the two.

The output gives you a number that sets league average at zero and ranges technically from negative infinity to about 2.5, but generally speaking you won’t see a reliever fall below -2.5. Basically it’s a -3 to 3 scale that puts good relievers on the plus side and bad ones on the negative side.

Additionally, at my discretion, relievers who have inherited fewer than five baserunners during the season (this number will likely be fluid based on where we are in the season) are given a league average SR+ so that if you don’t ever inherit runners you aren’t unfairly punished because you are not given sufficient opportunity to strand them or you are not given credit for an awesome strand rate if you strand the only runner you inherit.
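Here is a rough Python sketch of how the pieces described above could fit together. It is not the program mentioned later in this post, and a few things are assumptions on my part: the `RelieverLine` container and function names are invented, the expected OBP is taken as an input that has already been regressed for BABIP, xOBP+ is signed so that allowing a lower OBP than the league is positive, and the league OBP in the example is a placeholder value.

```python
from dataclasses import dataclass

LEAGUE_STRAND_RATE = 0.70  # league average strand rate given in the text


@dataclass
class RelieverLine:
    """Hypothetical container for one reliever's inputs."""
    inherited_runners: int
    inherited_stranded: int
    x_obp: float       # opponents' OBP, already BABIP-regressed elsewhere
    era_minus: float   # park-adjusted, 100 = league average
    fip_minus: float   # park-adjusted, 100 = league average


def strand_rate_plus(line: RelieverLine, min_inherited: int = 5) -> float:
    """Percent better or worse than league at stranding inherited runners.
    Relievers under the inherited-runner minimum get league average (0)."""
    if line.inherited_runners < min_inherited:
        return 0.0
    rate = line.inherited_stranded / line.inherited_runners
    return (rate - LEAGUE_STRAND_RATE) / LEAGUE_STRAND_RATE


def x_obp_plus(x_obp: float, league_obp: float) -> float:
    """Assumed sign convention: a lower OBP allowed scores positive."""
    return (league_obp - x_obp) / league_obp


def minus_to_plus(stat_minus: float) -> float:
    """Rescale ERA- or FIP- to zero and invert it, so better is positive."""
    return (100 - stat_minus) / 100


def soefa(line: RelieverLine, league_obp: float) -> float:
    """Average of eSOEFA (using ERA+) and fSOEFA (using FIP+)."""
    base = strand_rate_plus(line) + x_obp_plus(line.x_obp, league_obp)
    e_soefa = base + minus_to_plus(line.era_minus)
    f_soefa = base + minus_to_plus(line.fip_minus)
    return (e_soefa + f_soefa) / 2


# Invented example: strands 9 of 10, .280 xOBP, 70 ERA-, 80 FIP-.
# The .320 league OBP is a placeholder, not a sourced figure.
sample = RelieverLine(10, 9, 0.280, 70.0, 80.0)
print(round(soefa(sample, league_obp=0.320), 2))
```

A reliever who is exactly league average in every input comes out at zero, and stranding every inherited runner is worth the .43 described above.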

I’m pretty happy with the first round of results. The first run of results came from stats entering June 25th and it generally lines up with my impression of the best performing relief pitchers in baseball. I have no idea if this stat is predictive or how long it takes to stabilize. Right now, it correlates with ERA and FIP at -.73 and -.75 despite the fact that each is only 1/6 of the input and the R squared is around .6 using it to predict FIP, if those kinds of things interest you.

It’s experimental. It’s meant to be fun and maybe helpful.

A word of note is that Fangraphs and B-R seem to use different cutoffs for which relievers “qualify,” so this output may be missing a few relievers. I’m sorry about that. The great thing about this statistic is that I can easily produce the number for any reliever in baseball in less than two minutes. If you want to know how a reliever measures up or how a reliever did during a given season, just ask and I can provide the number based on a handy program I wrote. Hit me on Twitter @NeilWeinberg44 and I’d be happy to provide the SOEFA for any reliever.

Thanks for reading and I welcome any feedback. Who knows, maybe this will work.

Below are the SOEFA for the vast majority of qualifying relievers up through 6/24/13. If you want to know the SOEFA of a reliever not on this list or would like an updated score, please let me know.

| Rank | Player | Team | SOEFA |

| 1 | Sergio Romo | Giants | 0.99 |

| 2 | Jason Grilli | Pirates | 0.95 |

| 3 | Junichi Tazawa | Red Sox | 0.92 |

| 4 | Kevin Gregg | Cubs | 0.92 |

| 5 | Drew Smyly | Tigers | 0.9 |

| 6 | Joaquin Benoit | Tigers | 0.89 |

| 7 | Jordan Walden | Braves | 0.88 |

| 8 | Robbie Ross | Rangers | 0.87 |

| 9 | Mark Melancon | Pirates | 0.85 |

| 10 | Jesse Crain | White Sox | 0.83 |

| 11 | Edward Mujica | Cardinals | 0.79 |

| 12 | Brett Cecil | Blue Jays | 0.79 |

| 13 | Greg Holland | Royals | 0.75 |

| 14 | Oliver Perez | Mariners | 0.74 |

| 15 | Trevor Rosenthal | Cardinals | 0.74 |

| 16 | Kenley Jansen | Dodgers | 0.72 |

| 17 | Glen Perkins | Twins | 0.71 |

| 18 | Koji Uehara | Red Sox | 0.7 |

| 19 | Preston Claiborne | Yankees | 0.69 |

| 20 | Sam LeCure | Reds | 0.68 |

| 21 | Casey Janssen | Blue Jays | 0.64 |

| 22 | Mariano Rivera | Yankees | 0.63 |

| 23 | Luke Gregerson | Padres | 0.62 |

| 24 | Craig Kimbrel | Braves | 0.62 |

| 25 | Sean Doolittle | Athletics | 0.6 |

| 26 | Edgmer Escalona | Rockies | 0.56 |

| 27 | Tommy Hunter | Orioles | 0.56 |

| 28 | Brad Ziegler | Diamondbacks | 0.54 |

| 29 | Joe Nathan | Rangers | 0.53 |

| 30 | Joe Smith | Indians | 0.53 |

| 31 | Vin Mazzaro | Pirates | 0.51 |

| 32 | Jim Henderson | Brewers | 0.5 |

| 33 | James Russell | Cubs | 0.49 |

| 34 | Casey Fien | Twins | 0.48 |

| 35 | Tim Collins | Royals | 0.47 |

| 36 | Shawn Kelley | Yankees | 0.47 |

| 37 | Brian Matusz | Orioles | 0.46 |

| 38 | Addison Reed | White Sox | 0.46 |

| 39 | Tanner Scheppers | Rangers | 0.45 |

| 40 | Rafael Soriano | Nationals | 0.44 |

| 41 | Aroldis Chapman | Reds | 0.44 |

| 42 | Joel Peralta | Rays | 0.43 |

| 43 | Matt Reynolds | Diamondbacks | 0.43 |

| 44 | Brandon Kintzler | Brewers | 0.43 |

| 45 | Ryan Cook | Athletics | 0.42 |

| 46 | Chad Qualls | Marlins | 0.42 |

| 47 | Cody Allen | Indians | 0.4 |

| 48 | Andrew Miller | Red Sox | 0.4 |

| 49 | David Robertson | Yankees | 0.38 |

| 50 | Seth Maness | Cardinals | 0.36 |

| 51 | Bobby Parnell | Mets | 0.36 |

| 52 | Matt Belisle | Rockies | 0.36 |

| 53 | Josh Outman | Rockies | 0.36 |

| 54 | Rex Brothers | Rockies | 0.35 |

| 55 | Jonathan Papelbon | Phillies | 0.35 |

| 56 | Dale Thayer | Padres | 0.35 |

| 57 | Darren O’Day | Orioles | 0.33 |

| 58 | Justin Wilson | Pirates | 0.33 |

| 59 | Luke Hochevar | Royals | 0.31 |

| 60 | Grant Balfour | Athletics | 0.3 |

| 61 | John Axford | Brewers | 0.29 |

| 62 | Ernesto Frieri | Angels | 0.29 |

| 63 | Drew Storen | Nationals | 0.27 |

| 64 | Bryan Shaw | Indians | 0.26 |

| 65 | Nate Jones | White Sox | 0.26 |

| 66 | Luis Avilan | Braves | 0.25 |

| 67 | Anthony Varvaro | Braves | 0.25 |

| 68 | Anthony Swarzak | Twins | 0.24 |

| 69 | Paco Rodriguez | Dodgers | 0.24 |

| 70 | Jean Machi | Giants | 0.2 |

| 71 | Tyler Clippard | Nationals | 0.19 |

| 72 | Matt Thornton | White Sox | 0.19 |

| 73 | Steve Delabar | Blue Jays | 0.18 |

| 74 | Craig Stammen | Nationals | 0.17 |

| 75 | Tony Watson | Pirates | 0.17 |

| 76 | Pat Neshek | Athletics | 0.16 |

| 77 | Jamey Wright | Rays | 0.16 |

| 78 | J.P. Howell | Dodgers | 0.16 |

| 79 | Cesar Ramos | Rays | 0.15 |

| 80 | Alfredo Simon | Reds | 0.15 |

| 81 | Troy Patton | Orioles | 0.15 |

| 82 | Matt Lindstrom | White Sox | 0.14 |

| 83 | Jim Johnson | Orioles | 0.12 |

| 84 | Carter Capps | Mariners | 0.11 |

| 85 | Ryan Pressly | Twins | 0.11 |

| 86 | Steve Cishek | Marlins | 0.11 |

| 87 | Darin Downs | Tigers | 0.1 |

| 88 | Antonio Bastardo | Phillies | 0.09 |

| 89 | Charlie Furbush | Mariners | 0.07 |

| 90 | Brian Duensing | Twins | 0.07 |

| 91 | Yoervis Medina | Mariners | 0.07 |

| 92 | Jerry Blevins | Athletics | 0.07 |

| 93 | Tom Gorzelanny | Brewers | 0.06 |

| 94 | Jared Burton | Twins | 0.05 |

| 95 | Jose Veras | Astros | 0.05 |

| 96 | Joe Kelly | Cardinals | 0.05 |

| 97 | David Hernandez | Diamondbacks | 0.04 |

| 98 | Ryan Webb | Marlins | 0.04 |

| 99 | Aaron Loup | Blue Jays | 0.03 |

| 100 | Wesley Wright | Astros | 0.01 |

| 101 | Bryan Morris | Pirates | 0.01 |

| 102 | Burke Badenhop | Brewers | 0 |

| 103 | Dane de la Rosa | Angels | -0.02 |

| 104 | Adam Ottavino | Rockies | -0.04 |

| 105 | LaTroy Hawkins | Mets | -0.04 |

| 106 | Cory Gearrin | Braves | -0.06 |

| 107 | Joe Ortiz | Rangers | -0.08 |

| 108 | Wilton Lopez | Rockies | -0.08 |

| 109 | Brandon Lyon | Mets | -0.08 |

| 110 | J.J. Hoover | Reds | -0.08 |

| 111 | Mike Dunn | Marlins | -0.09 |

| 112 | Fernando Rodney | Rays | -0.1 |

| 113 | Hector Ambriz | Astros | -0.1 |

| 114 | Paul Clemens | Astros | -0.13 |

| 115 | Tom Wilhelmsen | Mariners | -0.13 |

| 116 | Matt Guerrier | Dodgers | -0.13 |

| 117 | Josh Roenicke | Twins | -0.17 |

| 118 | Jose Mijares | Giants | -0.21 |

| 119 | Michael Gonzalez | Brewers | -0.23 |

| 120 | Jonathan Broxton | Reds | -0.25 |

| 121 | Jake McGee | Rays | -0.25 |

| 122 | Matt Albers | Indians | -0.26 |

| 123 | A.J. Ramos | Marlins | -0.26 |

| 124 | Scott Rice | Mets | -0.29 |

| 125 | Nick Hagadone | Indians | -0.31 |

| 126 | Travis Blackley | Astros | -0.33 |

| 127 | Vinnie Pestano | Indians | -0.34 |

| 128 | George Kontos | Giants | -0.35 |

| 129 | Mike Adams | Phillies | -0.39 |

| 130 | Clayton Mortensen | Red Sox | -0.4 |

| 131 | Garrett Richards | Angels | -0.43 |

| 132 | Heath Bell | Diamondbacks | -0.46 |

| 133 | Esmil Rogers | Blue Jays | -0.5 |

| 134 | Ronald Belisario | Dodgers | -0.51 |

| 135 | Jeremy Affeldt | Giants | -0.55 |

| 136 | Brandon League | Dodgers | -0.55 |

| 137 | Jeremy Horst | Phillies | -0.58 |

| 138 | Kelvin Herrera | Royals | -0.67 |

| 139 | Carlos Marmol | Cubs | -0.72 |

| 140 | Huston Street | Padres | -0.82 |

| 141 | Anthony Bass | Padres | -0.94 |

| 142 | Hector Rondon | Cubs | -1.24 |